Django Rest Framework Throttle Race Condition

In a recent project, I used drf's SimpleRateThrottle to implement rate limiting on some views. Curious about the implementation, I reviewed the source, and noticed that the throttler uses the cache backend with a sliding window log algorithm. The high level approach is:

- Generate key for request

- Lookup window in cache by key

- Purge old entries (outside current window)

- Check if window is full

- If so, fail

- Otherwise, insert current timestamp into window

- Set window in cache

- Pass

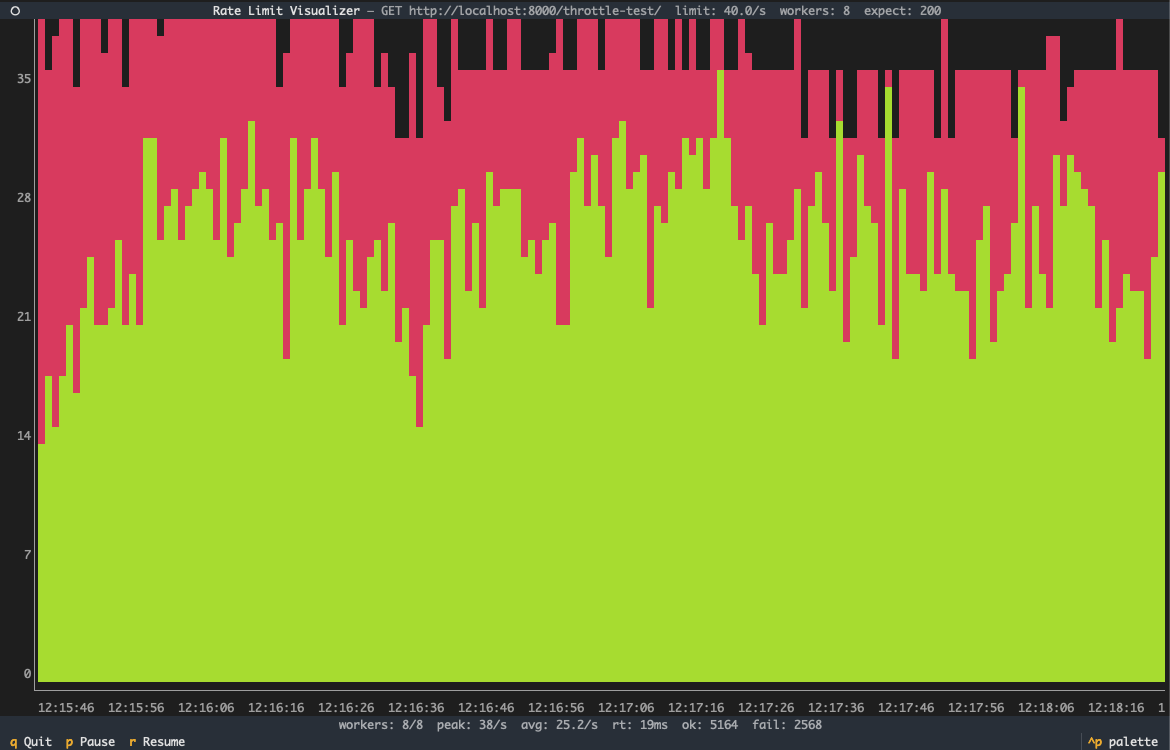

Notice anything? There's a race condition between steps 2 and 7: multiple requests may retrieve the window from the cache in parallel, and later when setting the updated window, the last request will overwrite the other entries. To demonstrate this behavior, I created a small tool to visualize the success rates of requests against a rate limited url:

rlui tests requests against a url for success, over time at a given rate. In the above, it's clear that SimpleRateThrottle is vulnerable to the above race condition, as requests repeatedly exceed the configured limit of 10 req/s: at an input of 40 req/s, the peak success was 38 req/s, with an average success of 24 req/s.

Now, I'm not the first to point this out - it's been reported before. I agree with the maintainers that it's out of scope of drf to provide a perfect solution, as rate limiting algorithms are diverse in their costs and benefits. Out of curiosity, I decided to implement my own rate limiting algorithm, using redis: the same sliding window log algorithm, without the race condition.

Redis provides a sorted set primitive, which maps each set entry to a score, which is used for ranking or sorting. To implement a sliding window log, map each request key to its numeric timestamp. Redis provides a command to cull the sorted set within a given range, perfect for purging entries outside the current window. At a high level:

- Generate key for request

- Purge old entries (outside current window)

- Add current timestamp into window

- Check if window is full

- If so, remove current timestamp from window, and fail

- Pass

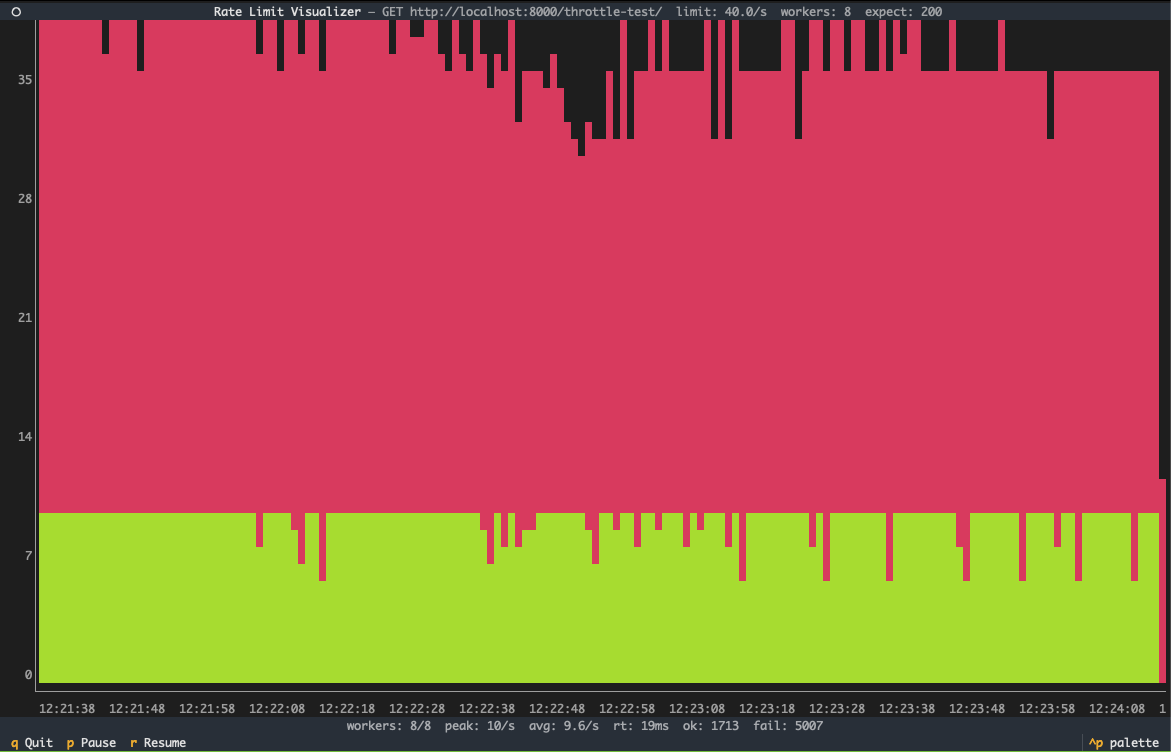

Here's how it runs:

The rate limiter never allows requests above 10 req/s. Voila!

The key difference is that the window updates do not overwrite each other, i.e. entries may be safely added and removed in parallel. The window is therefore not prone to data loss, as in SimpleRateThrottle. In code, it looks like this:

now = self.timer()

req_key = str(now)

pipeline = redisclient.pipeline()

pipeline.zremrangebyscore(self.key, 0, now - self.duration)

pipeline.zadd(self.key, {req_key: now})

pipeline.expire(self.key, self.duration)

pipeline.zcard(self.key)

_, _, _, num_requests_in_window = pipeline.execute()

if num_requests_in_window > self.num_requests:

# remove key, request was not processed and doesnt count

redisclient.zrem(self.key, req_key)

return False

return True

The relevant redis commands are:

- zadd: add an element (and its score) to a sorted set. Used to add requests to the sliding window.

- zcard: measure the cardinality, or size, of the set. Used to count the number of requests in the sliding window.

- zremrangebyscore: removes entries from a set by score. Used to purge old entries in the sliding window, from 0 (beginning of time) to the start of the current window (now - duration).

- expire: expire a key. Used to cleanup the database; after duration seconds, the sliding window may be purged.

Note the use of a pipeline, which is similar to a database transaction: this sends all commands together to be executed atomically. This ensures that the window size is counted correctly, and also prevents crashing midway from preventing expiring the key.

A savvy reader will note that this code has its own race condition: there's a time gap between adding a request to the window and checking the window's size, and later removing the request from the window. If a request comes in and exceeds the window limit, then before that request is removed, a request could come in that should pass, but doesn't, because the window has extra requests. In short, this implementation will reject more requests under heavy pressure, as opposed to allowing them. This could actually be resolved too, but would require a lua script implementing the same logic. Following the drf authors, I wanted a solution that held up, not one that's perfect.

For the full code of the throttler, see this gist.